Speculative Inference Algorithms for LLMs

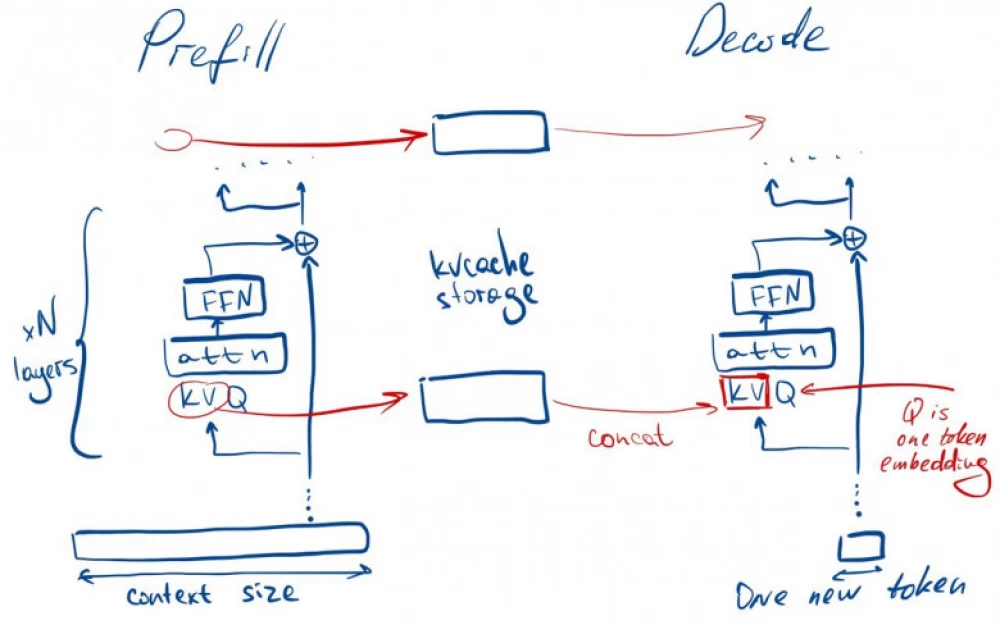

In recent years, the quality of LLMs has improved significantly, quantization methods have gotten better, and GPUs have become more powerful. Nevertheless, generation quality still depends directly on the number of model weights and, consequently, on the computational cost. In addition, text generation is autoregressive: tokens are produced one at a time, each conditioned on all the preceding ones, so the cost of generation depends on both the context length and the number of generated tokens.
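To make the last point concrete, here is a minimal Python sketch of greedy autoregressive decoding. `greedy_generate` and `toy_model` are hypothetical names introduced for illustration. `toy_model` stands in for a real LLM forward pass and simply returns the previous token plus one. The `positions_processed` counter assumes no KV cache, so every step re-reads the whole prefix. With a cache, each step feeds only one new token, but attention still reads all earlier positions, so the per-step cost still grows with the context.

```python
from typing import Callable, List, Tuple


def greedy_generate(next_token: Callable[[List[int]], int],
                    prompt: List[int],
                    max_new_tokens: int) -> Tuple[List[int], int, int]:
    """Autoregressive decoding: one model call per generated token.

    `next_token` is a hypothetical stand-in for an LLM forward pass that
    returns the most likely next token given the full sequence so far.
    Returns the final sequence, the number of model calls, and the total
    number of token positions fed to the model (assuming no KV cache).
    """
    tokens = list(prompt)
    calls = 0
    positions_processed = 0
    for _ in range(max_new_tokens):
        # Each step needs the whole prefix, including the token produced
        # by the previous step, so the steps cannot run in parallel.
        positions_processed += len(tokens)
        tokens.append(next_token(tokens))
        calls += 1
    return tokens, calls, positions_processed


# Toy "model" (purely illustrative): predicts the previous token + 1.
def toy_model(seq: List[int]) -> int:
    return seq[-1] + 1


out, calls, work = greedy_generate(toy_model, prompt=[1, 2, 3], max_new_tokens=4)
print(out)    # [1, 2, 3, 4, 5, 6, 7]
print(calls)  # 4
print(work)   # 3 + 4 + 5 + 6 = 18
```

The number of sequential model calls equals the number of new tokens, and the work per call grows with the prefix length. This is the dependence on context size and output length described above.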