The following results were found for the tag "training":

Large language models are used everywhere: they generate designs for cars, houses, and ships, summarize round tables and conferences, and draft key points for articles, mailings, and presentations. But for all the perks of adopting AI, security should not be forgotten. Large language models are attacked in a variety of sophisticated ways, and multimillion-dollar investments in protection against prompt injection are among the top news stories about neural networks. So let's talk about what threats exist and why investors pay big money to build such businesses. In the second part of the article, I will explain how to protect against them.

We moved away from traditional online training and created a product that solves specialists' problems. Interactive simulators and AI make learning useful, engaging, and effective.

Hello, this is Yulia Rogozina, business analyst at Sherpa Robotics. Today I have translated for you an article about a startup that built a platform for generating 3D data without a team of 3D specialists. I invite you to take a look at a possible business idea: the company's main target market is the USA, but Russia has exactly the same needs.

Will we ever be able to trust artificial intelligence?

Hello! I am Georgiy, a developer on the team that created Unidraw. I will tell you the story of how we went looking for a tool for collaborative sessions on a virtual whiteboard. At first we deployed an open-source solution, but then our load grew so much that we had to write our own. This article covers how the product started, what it is now, and what we want it to become. There will be technical data, beautiful templates, and the story of our main mistake.

Irish full-time bug hunter Monke shares tips on conscious hacking and a selection of tools that simplify vulnerability hunting. As a bonus, a list of useful resources for those interested in the topic of bug bounty.

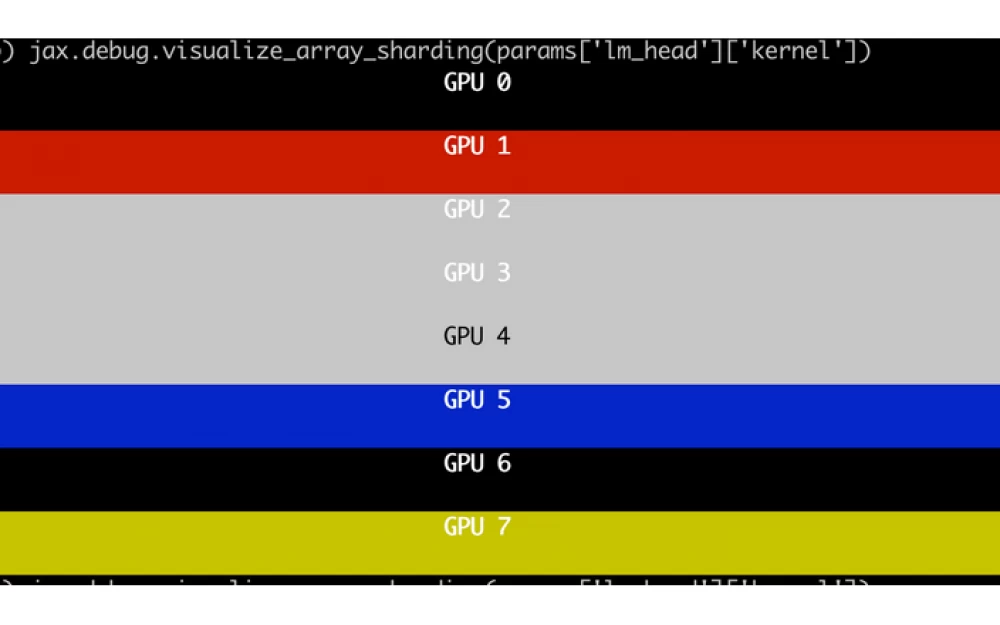

Open-source models keep growing in size, so the need for reliable infrastructure for large-scale AI training is greater than ever. Recently, our company fine-tuned the LLaMA 3.1 405B model on AMD GPUs, demonstrating that they can handle large-scale AI workloads effectively. Our experience was extremely positive, and we are happy to share all of our work on GitHub as open source.