- Security

- A

Overview and market map of ML protection platforms

Security Vision

It is obvious that various threats and security risks may arise in different components of such a system and stages of the process.

Here are examples of some of them:

1. An attacker can disrupt the functioning of the system or compromise the security of the information circulating in the system by altering the code of libraries and software components of the ML system.

2. An attacker can deceive the model by manipulating input data, causing it to produce incorrect responses (disruption of the functioning of the ML system, extraction of sensitive information from the model's training data or even its weights, or from the model's execution environment if the model has access to the environment).

3. An attacker can compromise the software components that produce model training, poisoning its data and leading the ML system to dysfunction or desired behavior.

4. An attacker can embed malicious code into the model's weights or in the model's code as a whole by modifying them.

5. An attacker may leave pre-trained models with embedded behavior beneficial to them in open sources.

Therefore, we will further consider key approaches and methods for protecting machine learning models.

1. Anomaly Detection for ML

One approach to protecting models is the detection of anomalies in the input data of ML models and in their behavior, that is, in their output data. This can involve using both simple logical rules and other ML models. In any case, the rules and models will classify the input/output data of the ML model as "normal" and "anomalous/malicious" (adversarial). The question that arises in this context is whether such a model can be universal for different models and tasks? There is no definitive answer; it can be partially asserted that for models of the same modality (that is, the nature of the data: text, images, or logs of certain systems), anomaly detectors may be universal, but such methods of a more general nature likely do not exist. It is also worth noting that detector models may be architecturally similar to the protected model.

2. Security Tuning of Models (Security Tuning)

One of the key methods for improving the resilience of ML models to attacks is fine-tuning models on so-called adversarial examples/data – data that causes incorrect behavior of the model. Malicious data represents specially created examples that lead to various negative consequences, such as a sharp decline in quality, undesirable behavior (especially in the case of LLMs), data leaks from the training dataset, and so on. Fine-tuning on data with malicious modifications helps to improve the model's resilience and reduce the likelihood of successful attacks of this kind.

Therefore, we will now review the key approaches and methods for protecting machine learning models.

3. Protection by Noise

One of the methods of protection against attacks targeting the model's output data (such as degrading their quality or extracting confidential information from them) is adding noise to the data. For example, this would work against a "model inversion" attack. This is an attack where an attacker restores the training data from the model's responses. Adding noise to part of the features, rounding numbers, and using other anonymization methods reduces the likelihood of a successful inversion attack, but this approach is not effective for all tasks: specifically, not for classification tasks.

4. Model Scanners for Identifying Software Vulnerabilities

One of the key tools for ensuring security is scanners, such as HL ModelScanner. These solutions allow the identification of software vulnerabilities in the code, vulnerabilities in the architecture of ML models, revealing susceptibility to both code injection attacks in software components and attacks conducted through manipulation of input data.

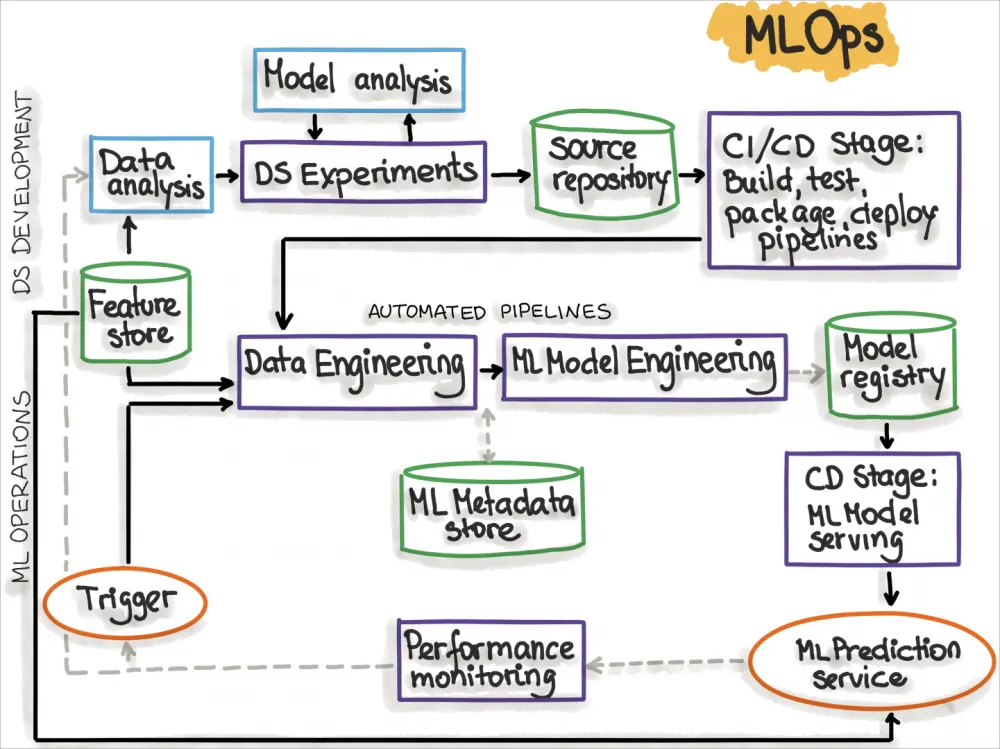

5. Implementation of MLSecOps Principles

In the context of ML security, ensuring the safe development of models plays an important role. There are several approaches to ensuring MLSecOps, which can be explored through the following links: frameworks databricks, Snowflake, Huawei, cyberorda:

· Integrate data encryption mechanisms at all stages of their lifecycle, including encryption at rest and in transit, to ensure data protection from unauthorized access.

· Implement strict access control to data and models, setting up multi-level authentication and access rights management to ensure that only authorized users can interact with sensitive data and models.

· Apply data anonymization methods, such as differential privacy, and use secure computations to minimize the risks associated with disclosing individual information when processing and analyzing large volumes of data, especially in collaborative environments involving multiple organizations.

· Include methods for counteracting machine learning attacks (adversarial) in the model development and training process, such as training with maliciously altered examples, which improves the models' resistance to input manipulation.

· Regularly conduct security audits and penetration testing to systematically identify and eliminate vulnerabilities in the infrastructure, new data, and new ML models.

· It is important to consider the existence of platforms such as MITRE ATLAS, which provide detailed information on tactics and techniques for AI attacks. Integration with such platforms helps protect ML models from a wide range of threats.

For a more detailed understanding of the current state of the market, let's consider two protection mechanisms in specific products: Bosch AI Shield and HiddenLayer MLDR.

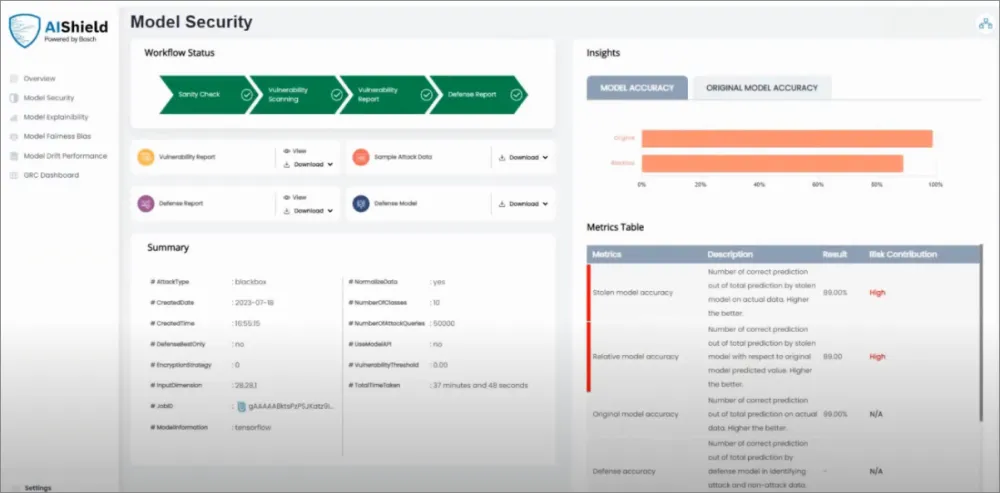

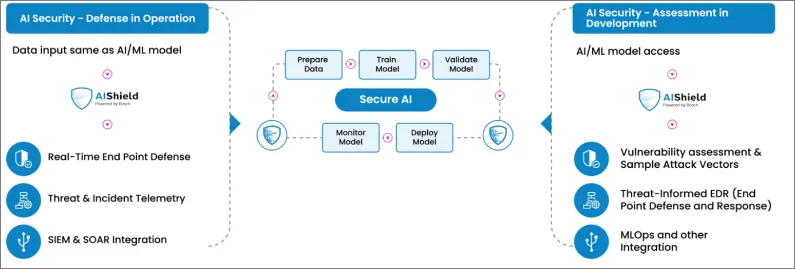

Bosch AI Shield

Bosch AI Shield is a powerful platform for protecting AI systems, providing security through vulnerability assessment and advanced security mechanisms. The platform offers SDKs and APIs that seamlessly integrate with existing ML workflows such as AWS SageMaker and Azure ML. The platform also supports integration with monitoring systems and confidential computing platforms like Fortanix.

The key features of Bosch AI Shield include:

Vulnerability Assessment: The platform performs deep analysis of model vulnerabilities, including theft, poisoning, evasion, and inversion attacks. This allows for early detection and mitigation of vulnerabilities.

Integration with MITRE ATLAS: Bosch AI Shield integrates with MITRE ATLAS, enabling tracking and analysis of current tactics and attack methods.

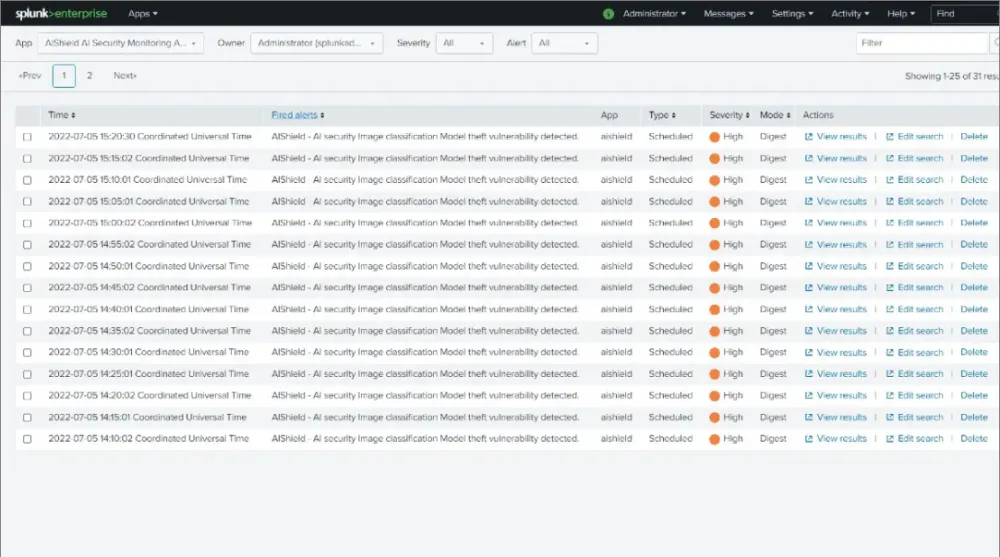

Incident Response: The platform provides endpoint protection, intrusion prevention, and attack data delivery to SIEM/SOAR systems such as Splunk and Microsoft Sentinel.

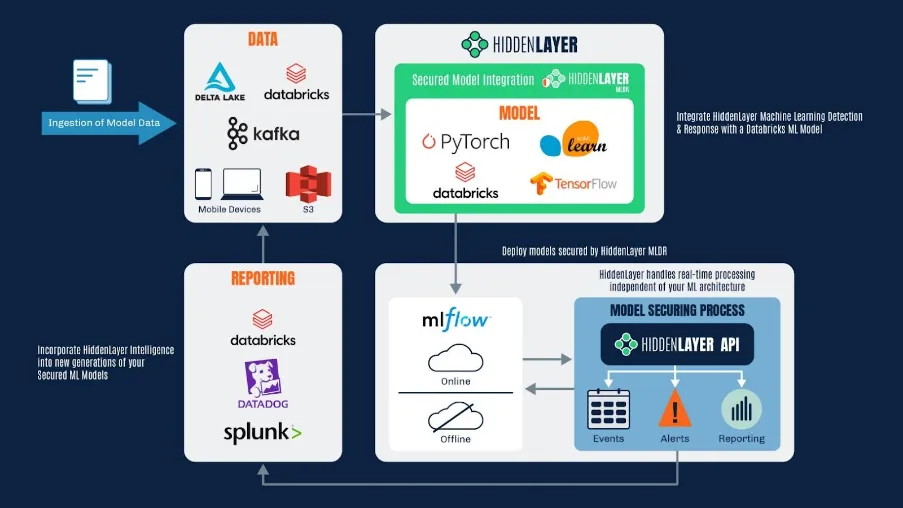

HiddenLayer MLDR

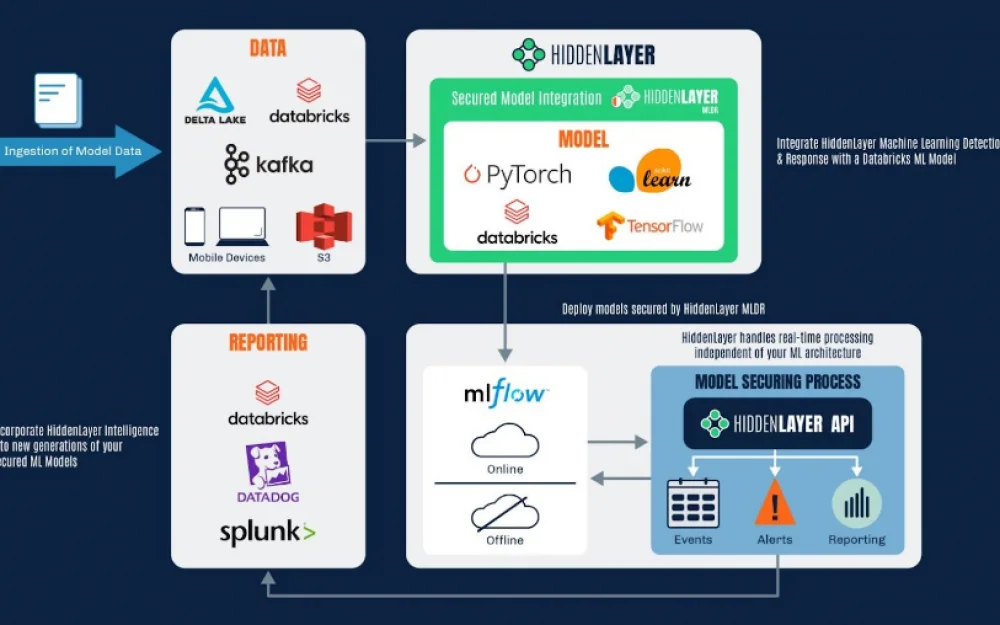

HiddenLayer MLDR is a first-of-its-kind cybersecurity platform for monitoring, detecting, and responding to attacks targeting ML models. HiddenLayer technology is non-invasive and does not require changes to models' data or performance, ensuring the confidentiality and security of the company's intellectual property.

The main capabilities of HiddenLayer MLDR include:

Integration with MITRE ATLAS: HiddenLayer MLDR supports integration with MITRE ATLAS, allowing the detection of attacks on ML models using more than 64 tactics and techniques.

Protection against inference attacks: The platform protects models from inversion attacks aimed at recovering original data from model outputs.

Protection against model manipulation: HiddenLayer MLDR enables detection and prevention of attempts to alter the model through manipulation of input data.

Protection against data poisoning: The platform prevents model poisoning, where attackers attempt to alter the model by introducing maliciously crafted data.

Protection against model theft: HiddenLayer MLDR protects the company's intellectual property by preventing attempts to extract or steal the model.

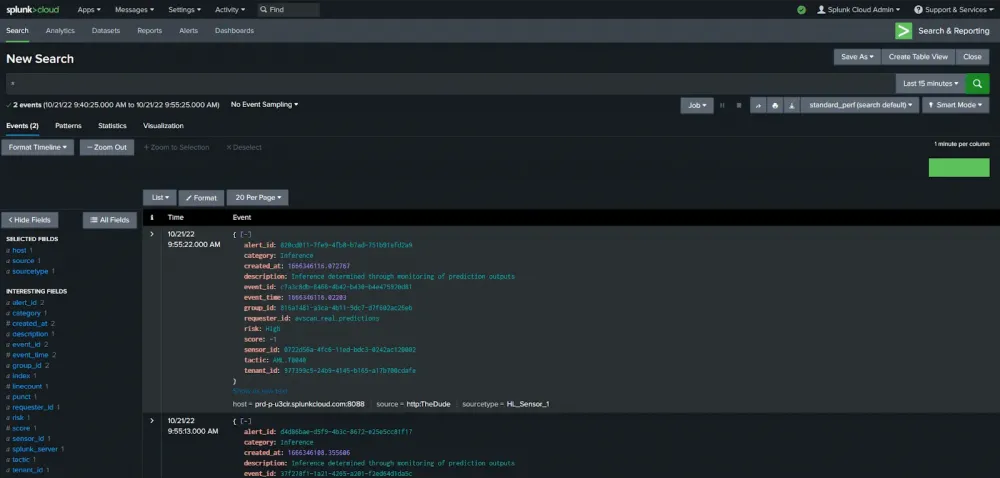

Integration with SIEMs such as Splunk and DataDog.

This product offers the following measures to respond to attacks:

Limit or block access to a specific model or query

Change classification scores to prevent probing of the model's error function gradients or decision boundaries

Redirect traffic to handle ongoing attacks

Involve a human in the triage and response process

Market Overview and Key Players

The market for ML protection platforms is rapidly developing, with a variety of solutions targeting different aspects of security. Let’s explore the main categories of solutions and key companies working in each of them.

Governance

AI security governance involves developing and implementing frameworks, policies, and procedures to ensure ethical, responsible, and secure use of AI technologies. Key players in this category include:

AI Ethics Compliance Platforms: Platforms for ensuring compliance with ethical standards and regulatory requirements for AI.

AI Risk Assessment Solutions: Software solutions for assessing and managing risks associated with AI deployment.

Privacy and Bias Auditing Tools: Tools for auditing and addressing privacy and bias issues in AI.

Key companies: Cranium, CredoAI, Arklow, HiddenLayer, ProtectAI.

Observability

Observability in AI security is the ability to monitor and understand the internal state of AI systems. It includes transparency in decision-making processes, understanding model behavior, and tracking its performance:

AI Monitoring Tools: Tools providing real-time data on AI system performance and health.

Explainability Interfaces: Platforms offering understandable explanations of AI decisions for users and stakeholders.

AI Anomaly Detection Systems: Solutions for detecting unusual patterns or behavior in AI operations that may indicate potential security issues.

Key companies: Humanloop, Helicone, CalypsoAI, Credal.ai, Flow.

Model Consumption Security

Detecting and responding to threats targeting deployed AI models is a key security task:

AI Intrusion Detection Systems: Systems for detecting intrusions and automatically responding to threats directed at AI.

Automated Response Solutions: Automated solutions for responding to AI-specific threats.

Key companies: HiddenLayer, Lasso Security, Bosch AI Shield, CalypsoAI.

AI Firewall

Filtering malicious input data and queries to protect AI systems:

AI-Powered Firewalls: Firewalls using AI to filter suspicious data.

Input Validation Tools: Tools for validating and filtering input data.

Key companies: CalypsoAI, Robust Intelligence, Troj.ai.

Continuous Red Teaming

Continuous attack simulations on AI systems to identify vulnerabilities:

Automated Red Teaming Platforms: Platforms for automated attacks on AI systems.

Continuous Security Assessment Tools: Tools for continuous security assessment.

Key companies: Adversa, Robust Intelligence, Lakera.

Data Leak Protection

Preventing unauthorized access and extraction of confidential data used by AI systems:

AI Data Loss Prevention (DLP) Solutions: Solutions for preventing data leaks in AI.

Secure Data Sharing Platforms: Platforms for secure data sharing.

Key companies: Nightfall, PrivateAI, Lakera.

Model Building & Serving

Monitoring and scanning vulnerabilities in AI development environments:

AI Code Scanners: Tools for scanning AI model code.

Vulnerability Management Tools for AI Pipelines: Tools for managing vulnerabilities in AI development pipelines.

Key companies: ProtectAI, HiddenLayer, Robust Intelligence, Giskard, Troj.ai, Bosch AI Shield.

PII Identification/Redaction

Detecting and protecting personal data in AI datasets:

Automated PII Detection & Redaction Tools: Tools for automated detection and redaction of personal data.

Foreign Regulatory Framework

AI Risk Management Framework | NIST

The AI risk management framework developed by the U.S. National Institute of Standards and Technology (NIST) provides recommendations for identifying, assessing, and mitigating risks associated with the deployment of AI systems.

The Act Texts | EU Artificial Intelligence Act

EU Artificial Intelligence Act establishes strict requirements for the development and use of AI systems in the European Union. The law categorizes AI systems into three groups: prohibited, high-risk, and others. Entered into force on August 1, 2024.

Prohibited AI Systems (Title II, Art. 5):

Use of manipulative or deceptive techniques to distort behavior and decision-making.

Exploitation of vulnerabilities related to age, disability, or socio-economic status.

Biometric categorization, determining sensitive characteristics of individuals.

Social scoring, risk assessment of committing crimes based on profiling.

Compilation of facial recognition databases through unsolicited collection of images from the internet or CCTV.

Emotion recognition in workplace or educational institutions.

Remote biometric identification in real-time (RBI) in public places for law enforcement, except in cases of searching for missing persons, preventing significant life-threatening threats or terrorist attacks, and identifying suspects in serious crimes.

High-Risk AI Systems (Title III):

Use of AI as a component of security or product requiring third-party evaluation for compliance.

Systems profiling individuals to assess various aspects of their lives.

Requirements for Providers of High-Risk AI Systems (Art. 8-25):

Implementation of a risk management system throughout the lifecycle of the system.

Ensuring data quality for training, validation, and testing of models.

Preparation of technical documentation to demonstrate compliance with requirements.

Designing systems with the capability to automatically record events and significant modifications.

Providing instructions for end-users to comply with requirements.

Developing systems with the capability to implement human oversight and ensure accuracy, reliability, and cybersecurity.

Implementing a quality management system to ensure compliance.

Conclusion

The market for platforms protecting machine learning is rapidly evolving, responding to growing security threats and regulatory requirements. Leading vendors such as Bosch AI Shield and HiddenLayer MLDR offer comprehensive solutions providing multi-layered protection for ML models from various types of attacks. However, effective AI protection requires not only the implementation of technical solutions but also a comprehensive approach that includes risk management, regulatory compliance, and continuous model monitoring. Companies investing in the protection of their AI systems gain significant advantages, ensuring data security, intellectual property protection, and business process reliability.

The platform provides extensive customization capabilities, enabling the implementation of some of the listed measures and systems to counter machine learning security risks. For example, based on our products, a tool for monitoring the input and output data of models, detecting dangerous patterns and anomalies in them can be created. Response measures, similar to, for example, HL MLDR, allow for the implementation and use of our attack countermeasures and incident management solutions — SOAR 2.0 or NG SOAR.

![From Virtual Hands to AI for Survivalists: Curious Open Agent OSes [and One Hardware Project]](https://cdn.tekkix.com/imgs/2026/05/habrcom/big/ce0b1057616faed51cd8b9f3b2b9.webp)

Write comment